Insurers pause to take pulse of medical AI

Artificial intelligence and machine learning technologies may help reduce medical errors and improve patient outcomes, but their use in health care raises liability concerns and questions around medical malpractice risks.

Insurers are not yet restricting coverage for AI-related risks, but some underwriters are asking health care providers about their use of AI and how it may be applied in a clinical setting.

Bias, data privacy and cybersecurity, and the possibility that some AI tools will give inaccurate results are among the concerns, experts said. State, federal and international regulations that would govern the use of AI and algorithms are quickly being developed, further complicating the risk landscape.

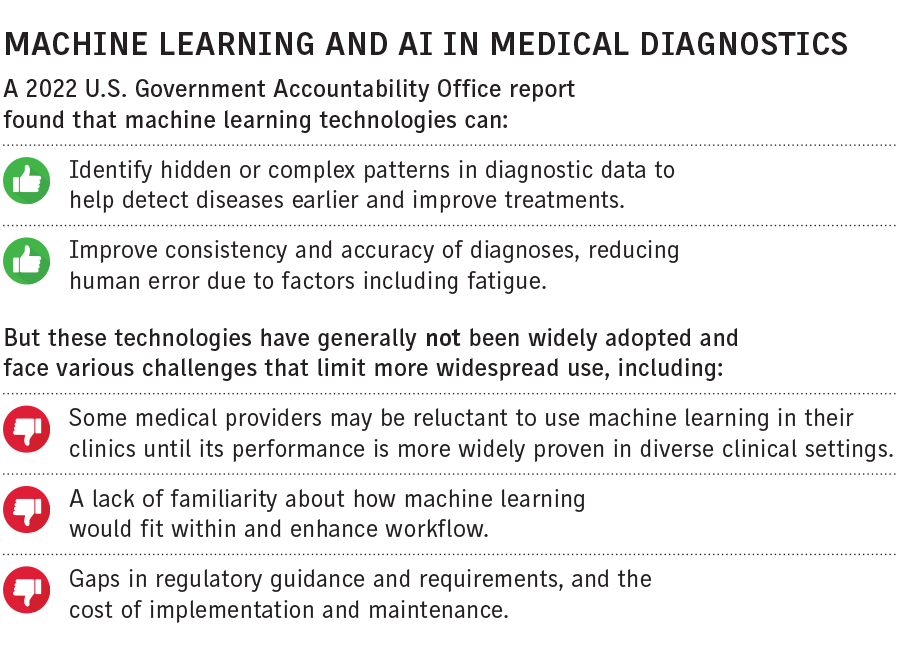

Since 1999, the U.S. Food and Drug Administration has authorized more than 520 AI and machine learning-enabled medical devices.

Most health systems are taking a cautious approach to implementing AI, said Martha Jacobs, Pittsburgh-based national health care practice leader at Aon PLC.

For many providers “there is a deliberately instituted pause on bringing some of that technology into the care and clinical setting,” she said. “There’s not this wholesale rush to pull in AI at this stage because there’s still so much unknown about it.”

With the introduction of any new technology or medical procedure there’s a learning curve, said Lainie Dorneker, Miami-based head of healthcare at Bowhead Specialty Underwriters Inc.

Providers should ensure that AI is used appropriately, Ms. Dorneker said. “You still need to involve patients and apply it to an individual patient. It’s important to realize medical judgment still needs to be employed,” she said.

Applying the appropriate standard of care will be critical, she said. The standard of care is evolving, experts said.

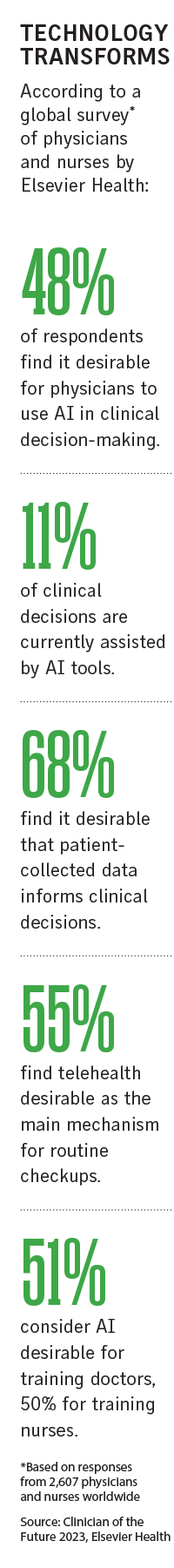

Clinician appetite is growing for generative AI tools such as ChatGPT, which passed the U.S. Medical Licensing Examination.

Some 48% of clinicians globally back using AI in clinical decision-making, but only 11% of clinical decisions are assisted by AI tools, according to the Clinician of the Future 2023 survey by Elsevier Health published last month. Despite widespread hesitancy, AI is increasingly being used for scanning, diagnosing and monitoring patients in the U.S., the survey found.

AI tools in medical imaging have helped radiologists detect cancers for decades, and some providers are using chatbots and virtual assistants to triage patients, easing physician fatigue, several experts said.

The industry must figure out the bias, the regulatory complexities, and the responsible use of AI as a tool in health care “because the focus has to be patient safety, but there are ethical considerations,” said Deepika Srivastava, executive vice president of medical professional liability for The Doctors Co. in Napa, California.

For example, large language models sometimes “hallucinate” and provide comprehensive answers to medical queries that appear convincing but may lack accuracy, Ms. Srivastava said.

“It requires careful verification, not blind reliance on AI-generated content,” she said.

AI is a helpful tool, “but it needs to be monitored and not necessarily relied upon because it’s only as good as the data that’s out there and the machine learning that’s being done,” said Dennis Cook, president of healthcare for Liberty Mutual Insurance Co. in Boston.

Contractual provisions, including indemnification and additional insured clauses, can help policyholders mitigate the risks, experts said.

“That transfer of risk is very important, so that the AI and tech companies take responsibility for their liability and actions,” and health care providers take responsibility for what they’re doing, Mr. Cook said.

Liability concerns

As health care organizations weigh the benefits and risks of integrating AI systems into their operations, there are added liability concerns.

The liability landscape is complicated, said Andrew Dallamore, eHealth product manager for the U.S. at CFC Underwriting Ltd. in London.

Medical providers within certain organizations may feel pressured to defer to the output of the AI algorithm, even if that deviates from their professional medical opinion, he said.

In some cases, providers may not be permitted to reject the output of the clinical algorithm, or there could be a lag in the patient’s care because they need a supervisor’s permission to deviate from the AI, Mr. Dallamore said.

“Even in cases where they can deviate, if they’re incorrect they could face disciplinary action on the basis that the patient can incur bodily injury from their error,” he said.

If providers use AI tools to assist in diagnosing and treating illnesses and the AI generates false information, there’s clearly a risk of a medical malpractice claim, said Aaron D. Coombs, Washington-based counsel at Pillsbury Winthrop Shaw Pittman LLP.

“Physicians or nurses could be held liable if there’s a misdiagnosis that results in bodily injury to a patient as a result of them using technology to aid the decision,” he said.

Other parties and lines of coverage could come into play, Mr. Coombs said. For example, AI or technology service providers could be “on the hook for providing services that don’t work properly,” he said.

These issues have yet to be tested in court, experts said.

In terms of misdiagnosis or missed triage of patient complaints, liability will fall squarely within the medical malpractice arena for now, said Hara Helm, Charlotte, North Carolina-based strategic healthcare risk advisor within Marsh LLC’s U.S. healthcare practice.

But if a design flaw in an AI system leads to an incorrect diagnosis or other issues that cause patient harm, then that could become a product liability issue, she said.

Directors and officers liability exposures might arise if a health care organization implements a bot to triage patient issues and a mistake occurs, Ms. Helm said. Associated cyber liability could arise if a hack or breach in the system were to disrupt how it functions, she said.

John Farley, managing director of the cyber practice at Arthur J. Gallagher & Co., noted personally identifiable or protected health information is subject to regulatory requirements.

Without having permission to share certain highly sensitive data, organizations “could be subject to privacy liability, and that may manifest in class action lawsuits, or regulatory investigations, fines and penalties,” Mr. Farley said.

As tech vendors get more aggressive in collecting data to build their tools, more issues are arising, said Jeffrey Ganiban, Washington-based partner at Faegre Drinker Biddle & Reath LLP. Health care providers must be careful not to share data with an algorithm that would violate patient privacy rights, he said.

Med mal questions

Med mal coverage has not yet been directly affected by the increased use of AI in health care, but some insurers are starting to ask policyholders questions about it, several experts said.

Peter Kolbert, senior vice president and chief claims officer for Healthcare Risk Advisors, part of TDC Group, in New York, said medical professional liability or hospital professional liability coverage will apply and defend physicians even when AI is a big factor in a misdiagnosis because “in 2023 the physician is still responsible for the diagnosis.”

So far, there have been few claims against equipment manufacturers or programmers or developers of AI, “though I strongly suspect that there have been some and, in the future, if there is a problem, they may be brought into the litigation as third-party defendants,” Mr. Kolbert said.

Physicians and health care facilities are subject to a distinct credentialing and review process, and underwriters assume they can deal with all aspects of patient care, whether via telemedicine or other technology, including AI, said Stuart Freeman, Birmingham, Alabama-based national healthcare practice leader at USI.

Whether the doctor used an X-ray or should have ordered an MRI or a CT scan is a question of medical judgment. “Questions of medical judgment are typically covered under a medical malpractice policy,” Ms. Dorneker said.

Underwriters are curious about how AI tools are being used, including how they may minimize staff burnout, she said.

If AI-related claims emerge, or if it becomes the next risk frontier, “we will start to see different kinds of underwriting questions being asked or maybe applications asking more pointed questions about the use of AI,” she said.