Artificial intelligence poses risk to HR practices


Artificial intelligence can be a valuable tool for employers in functions such as hiring, but it also poses significant risks of inadvertent discrimination, experts warn.

Use of generative AI tools such as ChatGPT, which learn patterns and relationships from massive amounts of data, has exploded, reaching more than 100 million users, according to a report released in June by the U.S. Government Accountability Office.

In the employment context, AI is currently used most often in hiring but is expected to play a greater role in related functions, including layoffs, promotions and succession planning.

“It’s quicker to have an AI source review all (job candidates’) resumes and sort them than pre-interview candidates. It makes the process quicker” and involves fewer human resources staff, said Mary Anne Mullin, New York-based senior vice president at QBE Insurance Group Ltd.

But the process still requires some human oversight, experts say. The issue has attracted the attention of Congress and federal regulators as well as some state and local legislative bodies (see related story below).

AI’s use in employment decisions will increase as the technology becomes more sophisticated, said Joni Mason, New York-based senior vice president and national executive and professional risk solutions practice leader for USI Insurance Services Inc.

Employers “want to make sure that their algorithm doesn’t discriminate against different groups,” said Scott M. Nelson, a partner with Hunton Andrews Kurth LLP in Houston.

He said a general, if simplistic, rule of thumb is the “4/5 rule,” under which a selection practice is considered to have a disparate impact if the selection rate for a given group is less than four-fifths, or 80%, of the rate for the group with the highest selection rate.
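The 4/5 rule comes down to simple arithmetic. The Python sketch below uses made-up applicant counts and a hypothetical `four_fifths_check` helper to compute each group’s impact ratio (its selection rate divided by the highest group’s selection rate) and flag any ratio under 0.8. It illustrates the rule of thumb only; it is not a legal test and not the method any particular employer, auditor or regulator uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GroupOutcome:
    """Applicant counts for one group at a single selection step (hypothetical data)."""
    name: str
    applicants: int
    selected: int

    @property
    def selection_rate(self) -> float:
        # Share of this group's applicants who were selected.
        return self.selected / self.applicants


def four_fifths_check(
    groups: list[GroupOutcome], threshold: float = 0.8
) -> dict[str, tuple[float, bool]]:
    """Map each group name to (impact ratio, flagged).

    Impact ratio = group's selection rate / highest selection rate among all groups.
    A ratio below the threshold (0.8 by default) is flagged as possible adverse
    impact under the 4/5 rule of thumb.
    """
    if not groups:
        raise ValueError("need at least one group")
    top_rate = max(g.selection_rate for g in groups)
    if top_rate == 0:
        raise ValueError("no group had any selections; ratios are undefined")
    results = {}
    for g in groups:
        ratio = g.selection_rate / top_rate
        results[g.name] = (ratio, ratio < threshold)
    return results


if __name__ == "__main__":
    # Made-up counts, for illustration only.
    outcomes = [
        GroupOutcome("Group A", applicants=80, selected=48),  # selection rate 60%
        GroupOutcome("Group B", applicants=60, selected=24),  # selection rate 40%
    ]
    for name, (ratio, flagged) in four_fifths_check(outcomes).items():
        note = " (below 4/5)" if flagged else ""
        print(f"{name}: impact ratio {ratio:.2f}{note}")
```

With these hypothetical numbers, Group B’s 40% selection rate is about two-thirds of Group A’s 60% rate, below the 80% threshold, so Group B would be flagged for closer review.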

In a putative class-action lawsuit filed in U.S. District Court in Oakland, California, in February, Derek L. Mobley blamed Pleasanton, California-based Workday Inc.’s AI systems and screening tools for his failure to find employment despite applying for at least 100 positions since 2018.

Mr. Mobley charged that the tools administered by Workday and used by employers rely upon “subjective practices” that have had a disparate impact on applicants who are African-American, older than 40, and/or disabled.

There will be more lawsuits, experts say.

“Wrongful employment decisions and, in particular, claims around discrimination are in the offing,” said Kelly Thoerig, Marsh LLC’s New York-based U.S. employment practices liability product leader.

She said that while there has not been a notable volume of AI-related employment claims to date, “the framework is there” for a creative plaintiffs bar.

Observers say employers will generally be held responsible for any perceived discrimination.

“It’s incumbent upon these companies to not just rely on the vendor that supplies the AI program,” Ms. Mullin said.

“It’s going to come down to having a very diverse and strong set of data,” said David Derigiotis, Detroit-based chief insurance officer for insurtech Embroker. “It has to be tested and tested and must be reported and the results found to be satisfactory before live production,” he said.

Employment practices liability policies will likely provide coverage if there is litigation, observers say.

“What insureds might want to look at is what kind of computer-related exclusions could be on the policy, and they need to talk with their broker to make sure that any wrongful claim that arises from AI is covered under the definition of wrongful employment, including claims and settlements,” Ms. Mullin said.

Technology errors and omissions coverage may also come into play, Mr. Derigiotis said.

“We have seen some questions around employers’ utilization of AI and AI-related technologies come up with more regularity” during underwriting, but generally speaking “it hasn’t created any outsize problems,” Ms. Thoerig said.

Lawmakers, regulators set rules on using AI as a recruitment tool

Artificial intelligence’s use in employment decisions has drawn the attention of federal, state and local regulators and legislators.

This includes:

- The Equal Employment Opportunity Commission in May issued guidance on how to avoid discrimination under Title VII of the Civil Rights Act of 1964 when using AI.

- During a June 13 hearing on AI, Sen. Jon Ossoff, D-Ga., chairman of the Senate Subcommittee on Human Rights & the Law, said AI’s potential impact on work could include fundamental shifts in recruitment, candidate screening and hiring.

- A New York City law, Local Law 144, which the city began enforcing July 5, requires employers and employment agencies that use AI-driven automated employment decision tools to have each tool undergo a bias audit no more than one year before using it, make information about the audit publicly available, and provide employees or job candidates with certain notices about its use.

- Illinois’ Artificial Intelligence Video Interview Act, which became effective Jan. 1, 2020, regulates employers’ use of AI to analyze job applicants’ video interviews.

- Maryland’s Facial Recognition Services Law, which became effective Oct. 1, 2020, prohibits employers from using facial recognition technology during pre-employment job interviews without the applicant’s consent.

In addition, European Union lawmakers have approved draft rules governing AI’s use in functions including hiring decisions. No comparable U.S. federal legislation is imminent.

Europe is taking a more unified approach than the United States, said Ernest Paskey, Washington-based partner and head of assessment solutions, North America, for Aon PLC.

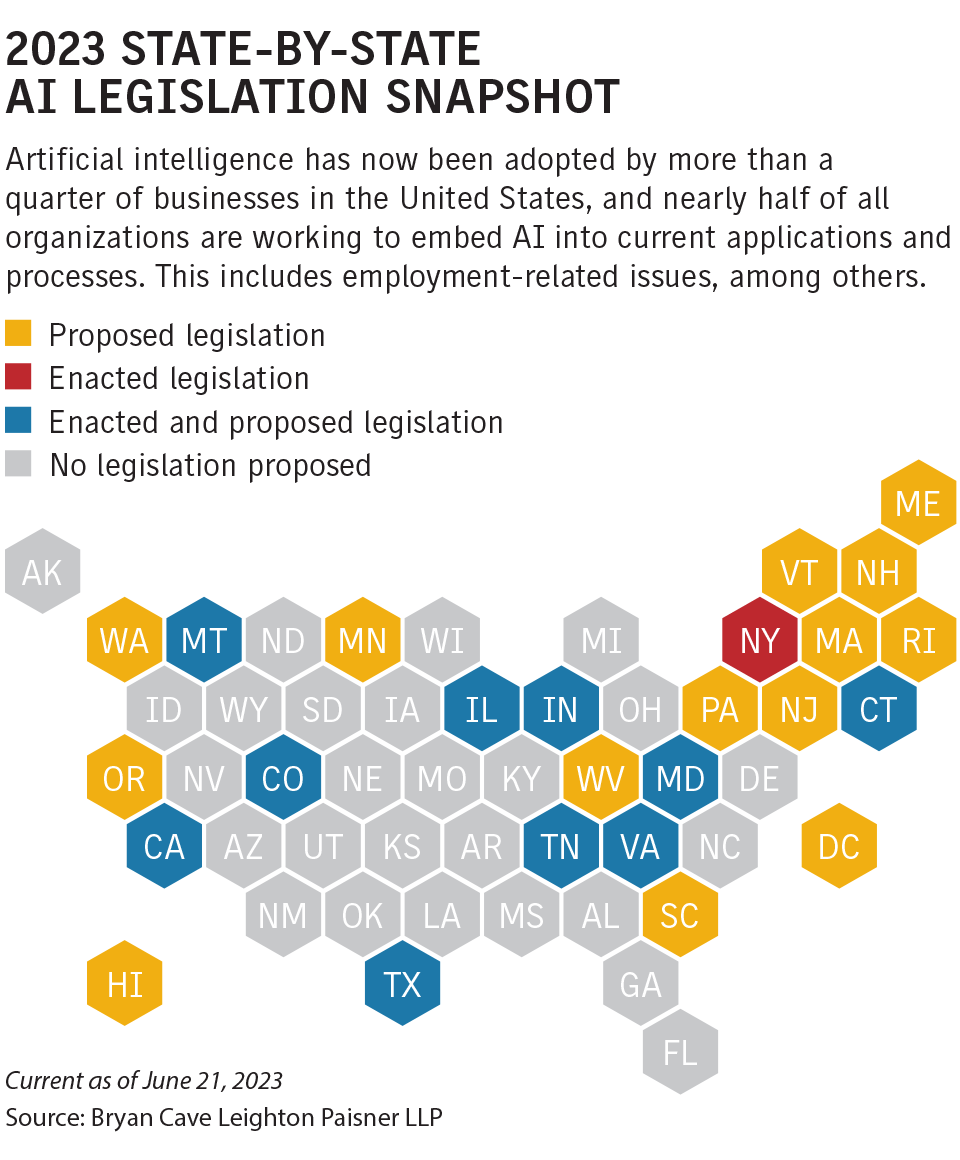

AI-related uses have also been the focus of many state legislatures (see chart).

Some of the proposed legislation targets hiring specifically, while other measures are framed more broadly, addressing issues such as housing or credit, said Michael Fetzer, Brunswick, Georgia-based associate partner, global science and analytics, for Aon.